AI Focus Has Moved from Building Models to Running Them

For years, the AI industry focused on training, assembling massive GPU clusters to build ever-larger language models. That focus is shifting. The frontier models are trained. The race now is to run them, to put them in front of millions of users, thousands of applications, and increasingly complex tasks. Running a trained model, feeding it a question and getting an answer back, is called inference.

The shift is already showing up in spending. Gartner projects that inference will surpass training as the dominant AI infrastructure workload in 2026, with inference-focused spending reaching $20.6 billion, up from $9.2 billion in 2025. By 2029, more than 65% of all AI-optimized infrastructure spending will support inference. The money is following the workload.

Inference looks simple from the outside. A user types a prompt. The model responds. But underneath, inference has become something the original storage infrastructure was never designed to handle. And the reason comes down to one thing: memory.

The Working Memory Problem

When a model processes a conversation or supports an agent, it builds a running record of everything it has seen so far. Every word, or more precisely, every "token" (a fragment of text that the model processes as a single unit), gets converted into mathematical values that the model uses to track context. This running record is called the key-value cache, or KV cache. Think of it as the model's short-term working memory. It is what allows the model to remember the beginning of your conversation by the time it reaches the end of its answer.

Why the KV Cache Is Growing

Until recently, most production inference was straightforward. A prompt went in. A response came out. The KV cache was small, the interactions were short, and GPU memory was sufficient.

Today's AI applications are not single-turn question-and-answer tools. A coding assistant works through a problem across dozens of exchanges, each building on everything that came before. A research agent might spend hours reasoning through a complex analysis, accumulating millions of tokens of context. Agentic systems that take multi-step, multi-agent actions on behalf of users maintain reasoning chains that grow with every step. An agent might also load a large book, a dozen research papers, or 100,000 lines of code along the way. The KV cache for these workloads is not measured in megabytes. It can reach hundreds of gigabytes per session. And the sessions do not end quickly.
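To see how context length drives these numbers, here is a back-of-the-envelope sketch. It uses the standard sizing rule for transformer attention: one key vector and one value vector per layer, per KV head, per token. The model shape below is hypothetical, chosen only for illustration, and is not the specification of any particular model.

```python
def kv_cache_bytes(num_tokens, num_layers, num_kv_heads, head_dim, bytes_per_value=2):
    """Estimate KV cache size for one session.

    The leading 2 accounts for storing both a key and a value vector.
    bytes_per_value=2 assumes 16-bit precision.
    """
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return num_tokens * per_token


# Hypothetical model shape (an assumption, not a real model's spec).
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128

for tokens in (4_000, 128_000, 1_000_000):
    gib = kv_cache_bytes(tokens, LAYERS, KV_HEADS, HEAD_DIM) / 2**30
    print(f"{tokens:>9,} tokens -> {gib:8.1f} GiB")
```

Under these assumptions each token costs 320 KiB of cache, so a short chat stays near a gigabyte while a million-token session lands in the hundreds of gigabytes.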

Why GPU Memory Becomes a Key Design Consideration

All of that working memory has to live somewhere. The fastest place is the high-bandwidth memory (HBM) built directly into the GPU package. An NVIDIA Hopper GPU has 80 GB of it. An NVIDIA Blackwell GPU has 192 GB. That is a significant amount and is enough to support standard AI chats, but a single long-context agent session can consume 32 GB. At thousands of concurrent users, GPU memory can fill quickly.
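The arithmetic is worth making explicit. The sketch below uses only the capacities and the 32 GB session figure quoted above. The `reserved_gb` parameter is an added assumption that stands in for model weights and runtime overhead, which also occupy HBM in a real deployment.

```python
HBM_GB = {"Hopper": 80, "Blackwell": 192}  # capacities quoted in the text
SESSION_KV_GB = 32                         # long-context session figure from the text


def sessions_that_fit(hbm_gb, session_gb, reserved_gb=0):
    """Whole sessions whose KV cache fits in HBM after reserving space for other uses."""
    return max(0, (hbm_gb - reserved_gb) // session_gb)


for gpu, capacity in HBM_GB.items():
    print(f"{gpu}: {sessions_that_fit(capacity, SESSION_KV_GB)} concurrent long-context sessions")
```

Even with nothing reserved for weights, that is two sessions on an 80 GB GPU and six on a 192 GB GPU.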

When it fills, the system has two options: discard the working memory and rebuild it the next time it is needed (called recomputation), or stop scaling. Recomputation is expensive and inefficient. Every evicted KV cache block that gets rebuilt is GPU compute spent on work the system already did, wasting throughput and increasing latency.
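A rough cost model shows why recomputation hurts. This sketch compares rebuilding a context through prefill against reloading the same KV cache from a slower tier. Every number in it is an illustrative assumption rather than a measurement, and it treats prefill as a flat tokens-per-second rate, which is a simplification.

```python
def recompute_seconds(num_tokens, prefill_tokens_per_s):
    """Time to rebuild the KV cache by running prefill over the whole context again."""
    return num_tokens / prefill_tokens_per_s


def reload_seconds(num_tokens, kv_bytes_per_token, tier_bytes_per_s, tier_latency_s=0.0):
    """Time to fetch the same KV cache from a slower memory or storage tier."""
    return tier_latency_s + (num_tokens * kv_bytes_per_token) / tier_bytes_per_s


def cheaper_path(num_tokens, kv_bytes_per_token, prefill_tokens_per_s,
                 tier_bytes_per_s, tier_latency_s=0.0):
    """Return the faster option along with both estimated times."""
    recompute = recompute_seconds(num_tokens, prefill_tokens_per_s)
    reload = reload_seconds(num_tokens, kv_bytes_per_token, tier_bytes_per_s, tier_latency_s)
    return ("reload" if reload < recompute else "recompute"), recompute, reload


# All figures below are hypothetical.
choice, recompute, reload = cheaper_path(
    num_tokens=100_000,
    kv_bytes_per_token=320 * 1024,
    prefill_tokens_per_s=10_000,
    tier_bytes_per_s=20e9,
)
print(f"recompute {recompute:.1f}s vs reload {reload:.1f}s -> {choice}")
```

The comparison flips if the tier is slow enough, which is why the bandwidth of the storage underneath matters as much as its capacity.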

Performance Gains Increase Data Demand

Every new generation of hardware produces and consumes KV cache data faster. A GPU that generates tokens at twice the speed needs its context data delivered at twice the speed, or it stalls waiting. Every gain in token generation speed intensifies the demand on the storage infrastructure underneath.

Agentic AI is no longer just a compute challenge. It is a KV-cache storage and data-movement problem. The GPU hardware is fast enough. The storage infrastructure has not kept pace with the shift from training to inference, and the shift from simple prompts to complex, stateful agents.

Solving it requires extending GPU memory beyond the GPU itself, into storage tiers that can persist context, share it across nodes, and deliver it back at speeds that keep GPUs productive. But a memory hierarchy that spans GPU HBM, host DRAM, local flash, and shared network storage does not manage itself. Something has to decide what context stays hot, what gets offloaded, where follow-up requests should be routed, and how data moves between tiers without stalling inference. That coordination layer, called orchestration, is the missing piece between fast hardware and fast inference.
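The "what stays hot, what gets offloaded" decision can be sketched as a toy tiered store. This is not Dynamo's implementation or API. The class name, tier names, and block-count capacities are all hypothetical, and the policy is plain least-recently-used: a block pushed out of a full tier is demoted to the next tier instead of being discarded, and a block that is read again is promoted back to the fastest tier.

```python
from collections import OrderedDict


class TieredKVStore:
    """Toy tiered cache: eviction demotes a block one tier down (LRU order)."""

    def __init__(self, tiers):
        # tiers: list of (name, capacity_in_blocks), fastest first.
        # A capacity of None means the tier is treated as unbounded.
        self.names = [name for name, _ in tiers]
        self.capacity = [cap for _, cap in tiers]
        self.blocks = [OrderedDict() for _ in tiers]

    def _insert(self, tier, block_id, data):
        store = self.blocks[tier]
        store[block_id] = data
        cap = self.capacity[tier]
        while cap is not None and len(store) > cap:
            victim_id, victim = store.popitem(last=False)  # least recently used
            if tier + 1 < len(self.blocks):
                self._insert(tier + 1, victim_id, victim)
            # Otherwise the block is gone and would have to be recomputed.

    def put(self, block_id, data):
        """Store a block in the fastest tier, removing any stale copy first."""
        for store in self.blocks:
            store.pop(block_id, None)
        self._insert(0, block_id, data)

    def where(self, block_id):
        """Name of the tier holding the block, or None if it is not cached."""
        for name, store in zip(self.names, self.blocks):
            if block_id in store:
                return name
        return None

    def get(self, block_id):
        """Return (data, tier_it_was_found_in) and promote the block to the fastest tier."""
        found_in = self.where(block_id)
        if found_in is None:
            return None, None
        data = self.blocks[self.names.index(found_in)][block_id]
        self.put(block_id, data)
        return data, found_in


store = TieredKVStore([("HBM", 2), ("DRAM", 2), ("flash", 4), ("network", None)])
for block_id in ("a", "b", "c"):
    store.put(block_id, block_id.upper())
print(store.where("a"))  # the oldest block was demoted rather than dropped
print(store.get("a"))    # reading it promotes it back and demotes another block
```

A production system has more to weigh, such as block size, transfer cost between tiers, and which blocks are part of active generation, but the shape of the decision is the same.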

From Problem to Solution: NVIDIA Dynamo

NVIDIA Dynamo is an open-source framework that coordinates inference across GPU clusters. It reached version 1.0 at GTC 2026 and has already been deployed in production by organizations including CoreWeave, DigitalOcean, PayPal, Pinterest, and Perplexity. Dynamo wraps around popular inference engines (vLLM, SGLang, TensorRT-LLM), so teams keep the tools they already use. Dynamo handles the orchestration on top. Three components matter most for maximizing inference performance and efficiency.

Disaggregated Prefill and Decode

Inference has two phases. "Prefill" is where the model reads the entire input prompt and builds the KV cache. It is compute-intensive. "Decode" is where the model generates its response one token at a time by reading from that KV cache. It is memory-bandwidth-intensive. These two phases have fundamentally different hardware requirements.

Dynamo can split them onto separate GPU pools, each scaled independently. This is called disaggregated inference. In industry benchmarks, this architectural separation delivered up to 7x throughput improvement on NVIDIA Blackwell hardware. Not faster silicon. Better architecture.
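The split can be pictured with a small simulation. This is a conceptual sketch only: the class names are hypothetical, the workers do no real model computation, and nothing here reflects Dynamo's actual interfaces. It shows the handoff: a prefill worker builds the KV cache, and a decode worker from a separately sized pool extends it one token at a time.

```python
from dataclasses import dataclass
from itertools import cycle


@dataclass
class Request:
    request_id: str
    prompt_tokens: list
    max_new_tokens: int


@dataclass
class KVHandle:
    """Stand-in for a KV cache that is built by prefill and consumed by decode."""
    request_id: str
    num_tokens: int
    built_by: str


class PrefillWorker:
    def __init__(self, name):
        self.name = name

    def prefill(self, req):
        # Stand-in for the compute-heavy pass over the whole prompt.
        return KVHandle(req.request_id, len(req.prompt_tokens), self.name)


class DecodeWorker:
    def __init__(self, name):
        self.name = name

    def decode(self, handle, max_new_tokens):
        # Stand-in for token-by-token generation; each step grows the cache by one token.
        output = []
        for i in range(max_new_tokens):
            output.append(f"tok{i}")
            handle.num_tokens += 1
        return output


class DisaggregatedScheduler:
    """Round-robin over two independently sized pools."""

    def __init__(self, prefill_pool, decode_pool):
        self._prefill = cycle(prefill_pool)
        self._decode = cycle(decode_pool)

    def run(self, req):
        prefill_worker, decode_worker = next(self._prefill), next(self._decode)
        handle = prefill_worker.prefill(req)
        output = decode_worker.decode(handle, req.max_new_tokens)
        return {
            "prefill_worker": prefill_worker.name,
            "decode_worker": decode_worker.name,
            "kv_tokens": handle.num_tokens,
            "output": output,
        }


# One prefill worker feeding three decode workers: the pools scale separately.
scheduler = DisaggregatedScheduler(
    [PrefillWorker("prefill-0")],
    [DecodeWorker(f"decode-{i}") for i in range(3)],
)
print(scheduler.run(Request("r1", ["how", "are", "you"], max_new_tokens=2)))
```

In a real cluster the handoff between the two pools is a KV cache transfer over the network, which is where the data transport layer comes in.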

KV Block Manager (KVBM)

KVBM solves a memory overflow problem. When GPU memory fills up, instead of discarding KV blocks and forcing recomputation later, KVBM migrates cached blocks that are not a part of active generation to a lower tier: host DRAM, local flash drives, or shared network storage. When the model needs that context again, KVBM retrieves it. Dynamo 1.0 added S3 API support for the storage tier, meaning the KV cache can be offloaded to any S3-compatible object store. KVBM also enables KV-aware routing, sending follow-up requests to the GPU that already holds the relevant cache.
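KV-aware routing is easier to picture with a small example. The sketch below is a generic prefix-matching router, not Dynamo's router: the class, the block size, and the worker names are hypothetical. Each block of tokens is hashed together with everything before it, so two requests share a hash only if they share the entire prefix up to that block. The router sends a request to the worker holding the longest matching prefix and breaks ties by load.

```python
import hashlib

BLOCK_SIZE = 4  # tokens per KV block; a hypothetical value


def block_hashes(tokens, block_size=BLOCK_SIZE):
    """Chained hashes of full blocks: equal hashes imply equal prefixes."""
    hashes, running = [], hashlib.sha256()
    full = len(tokens) - len(tokens) % block_size
    for start in range(0, full, block_size):
        block = tokens[start:start + block_size]
        running.update(" ".join(map(str, block)).encode() + b"|")
        hashes.append(running.hexdigest())
    return hashes


class KVAwareRouter:
    def __init__(self, worker_names):
        self.cached = {name: set() for name in worker_names}
        self.load = {name: 0 for name in worker_names}

    def _prefix_hits(self, worker, hashes):
        """Count leading blocks of the request that the worker already holds."""
        hits = 0
        for h in hashes:
            if h not in self.cached[worker]:
                break
            hits += 1
        return hits

    def route(self, tokens):
        """Pick the worker with the longest cached prefix; prefer lower load on ties."""
        hashes = block_hashes(tokens)
        best = max(self.cached, key=lambda w: (self._prefix_hits(w, hashes), -self.load[w]))
        hits = self._prefix_hits(best, hashes)
        self.cached[best].update(hashes)  # the chosen worker now holds these blocks
        self.load[best] += 1              # toy load counter; it never decays here
        return best, hits


router = KVAwareRouter(["gpu-0", "gpu-1"])
conversation = list(range(8))
print(router.route(conversation))                    # cold start, nothing cached
print(router.route(list(range(100, 108))))           # unrelated prompt goes to the idle worker
print(router.route(conversation + [8, 9, 10, 11]))   # follow-up returns to the warm worker
```

The follow-up request reuses two cached blocks and only needs prefill for the new one, which is the effect the routing is after.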

The impact is significant. NVIDIA has demonstrated that KV cache reuse from storage can accelerate time-to-first-token (the delay before the model starts responding) by up to 27x compared to full recomputation, with even larger gains at longer context lengths. The principle is straightforward: every cache block retrieved is a recompute operation that did not have to occur. The result is GPU compute returned to productive work. The GPU is not made faster. It is made available more often.

NVIDIA Inference Xfer Library (NIXL)

NIXL is the data transport layer. It moves KV cache between memory tiers on different nodes using RDMA (Remote Direct Memory Access), a networking technology that lets one machine read directly from another machine's memory without involving the operating system. NIXL operates at sub-millisecond latency. All major inference engines integrate with it, and storage vendors plug in through native NIXL plugins. Because both sides use a common interface, everything works the same regardless of which inference engine or storage solution you choose.

Dynamo solves orchestration. But orchestration is only as good as the infrastructure underneath it. The software can decide what data moves where, and NIXL makes that data movement efficient at the software layer, but the hardware has to deliver it at speeds that keep GPUs productive. That is the role of the storage architecture.

The Hardware Layer: NVIDIA STX and the Memory Hierarchy

At GTC 2026, NVIDIA introduced NVIDIA STX, a modular reference architecture that defines how storage infrastructure should be built for AI inference at scale. The NVIDIA Vera Rubin platform includes an STX rack populated with NVIDIA BlueField-4 STX servers. Each server features the NVIDIA BlueField-4 DPU, a powerful storage processor that sits directly in the network path between GPUs and storage drives and runs the NVIDIA DOCA Memos framework, alongside NVIDIA ConnectX-9 SuperNICs and NVIDIA Spectrum-X Ethernet. Because this path bypasses the host CPU entirely, data moves from flash storage to GPU memory without the traditional layers of operating system and software overhead that add latency at every step. NVIDIA's benchmarks show STX delivering up to 5x token throughput, 4x energy efficiency, and 2x faster data ingestion compared to traditional storage architectures.

The KV Cache Memory Hierarchy

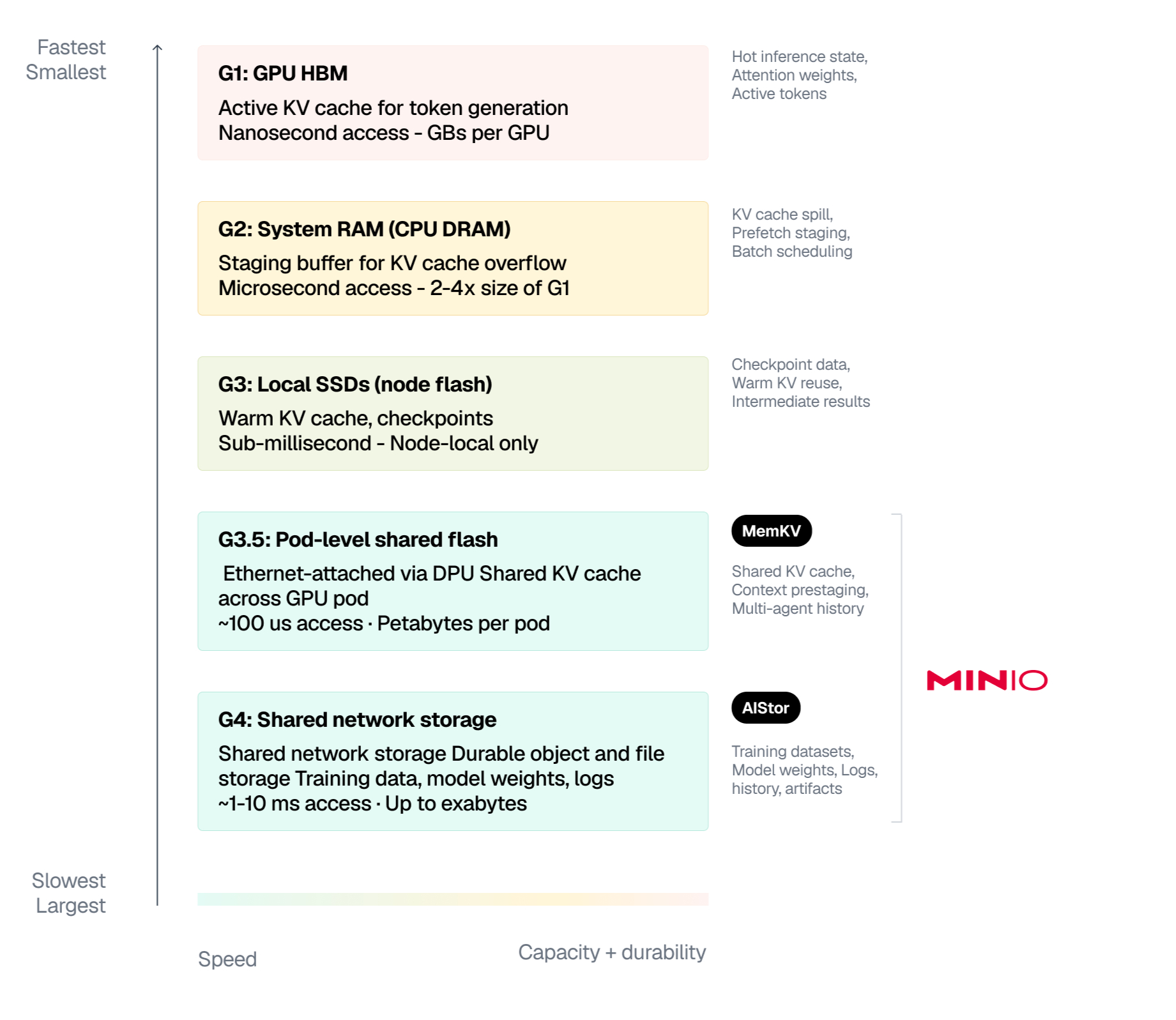

The modern inference stack formalizes the memory tiers (G1-G4) that inference workloads require and gives each tier a defined role. Think of it like the memory hierarchy in a personal computer: the processor has a tiny amount of ultra-fast cache, then there is RAM for active programs, then a hard drive for everything else. The same principle applies here, at data center scale.

Where MemKV Fits

What separates MemKV from every other storage offering in the ecosystem is G3.5. Because MemKV runs natively on ARM64 and requires no external metadata databases or background services, it runs directly on NVIDIA BlueField inside the fabric. G3.5 is where KV cache gets staged back into GPU memory ahead of the decode phase so GPUs never stall waiting on storage. That is where inference performance is won or lost.

Conclusion

The inference stack is being redesigned around a memory hierarchy that extends from GPU memory to shared network storage. NVIDIA Dynamo provides the orchestration. NVIDIA BlueField-4 STX provides the reference architecture. MinIO MemKV provides the context memory tier at G3.5. Every KV cache block retrieved instead of recomputed is GPU compute returned to productive work.

Gartner forecasts global AI spending at $2.52 trillion in 2026, with AI infrastructure alone accounting for $401 billion. The organizations building purpose-built inference infrastructure now will be running production AI at scale while everyone else is still running proofs of concept.

NVIDIA Dynamo for orchestration. NVIDIA BlueField-4 STX for the reference architecture. MinIO MemKV for context memory that runs inside the fabric, not beside it. Build accordingly.

Resources

- You can get access and learn more here.

- Introducing MinIO MemKV (launch blog)