Cloud analytics has become the standard for enterprise AI and advanced data science, with Databricks at the center of that shift. It powers large-scale analytics, machine learning, and real-time intelligence in the cloud for many of the world’s largest organizations.

However, speed depends on access to data, and in many enterprises the data that fuels those workloads still resides on-premises. This is not accidental. Scale, performance requirements, cost, regulatory obligations, and operational realities often require high-value data to remain on-premises.

This creates a persistent divide between cloud compute and on-premises data. Historically, making that data available to Databricks required copying, staging, and synchronizing it into the cloud before analysis could begin, in cases where moving the data to the cloud was even feasible. That process introduces delay before the first query ever runs, and as data volumes grow those delays compound. Insights that should arrive in seconds can take hours or longer. Increased costs and operational overhead inevitably follow.

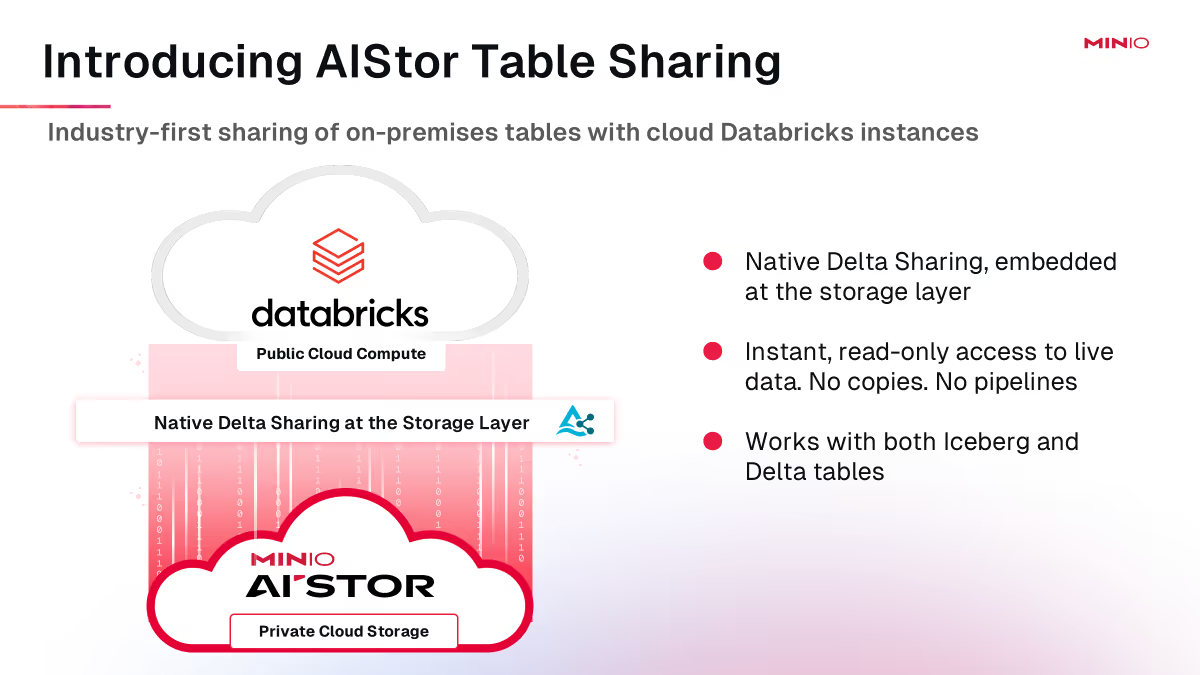

AIStor Table Sharing removes that constraint. By natively integrating the Delta Sharing protocol into AIStor, MinIO enables Databricks to access live, governed on-premises data without replication or additional infrastructure. Compute remains in the cloud while data remains where it makes the most sense, and queries run against current data rather than stale copies.

This reflects how modern enterprises now operate. By embedding data sharing directly into the data foundation, AIStor ensures that cloud analytics can scale without being constrained by data movement, latency, or duplication.

The Hidden Cost of “Just Move the Data”

The delay created by copying data is only the most visible symptom. The deeper issue is structural.

For years, enterprises have bridged the gap between cloud analytics and on-premises data by replicating datasets into new environments. They copy data into cloud storage, synchronize it across platforms, and maintain parallel versions so downstream tools can consume it.

At small scale, this appears manageable. At enterprise scale, small inefficiencies compound quickly.

Every copy multiplies storage cost and compliance exposure. Every synchronization job adds operational overhead and increases the risk of drift between systems. Each new analytics initiative expands the surface area that must be governed, secured, and maintained. As data volumes grow, so does the complexity required to keep those copies aligned.

The result is an architecture that becomes more fragile as it becomes more valuable. What began as a workaround evolves into an ongoing tax on innovation.

When insight depends on replication, success increases both risk and cost.

A Quiet Shift in How Data Is Shared

A different approach has been taking shape. Delta Sharing, introduced by Databricks in 2021, is an open protocol for securely sharing live tables without copying data. It decouples data ownership from data consumption. Teams can access the data they need with the tools they prefer, while data remains governed at the source.

This is an important step forward. But in most deployments, Delta Sharing still lives outside the core of the data stack. It often requires a standalone sharing server, additional services for authentication and governance, and operational glue to hold it all together. With more than 300% year-on-year usage growth, Delta Sharing is rapidly becoming the default mechanism for open data sharing in the Databricks ecosystem. But to-date it has primarily been used to share data across cloud analytics platforms, leaving troves of high value, high volume on-premises data untapped.

The result is progress that still carries operational drag. Sharing is possible, but it is not yet fundamental.

Protocols unlock leverage. Architecture determines whether that leverage compounds. Unlocking Delta Sharing in these environments requires an object store with strict Amazon S3 compatibility. That foundation already exists in AIStor and is proven at enterprise scale.

What’s Actually New with AIStor Table Sharing

AIStor Table Sharing changes where sharing lives.

Rather than bolting Delta Sharing on as a separate service, MinIO has embedded it directly into AIStor. In fact, AIStor is the first on-premises object store to embed Delta Sharing directly into the storage layer. Sharing becomes an inherent capability of the system that already stores and governs the data.

There is no separate sharing server to deploy, no parallel governance plane to manage, and no export or replication layer to maintain. Organizations can securely share live tables in place, across clouds, platforms, and teams.

For the first time, enterprises can make live, system-of-record data stored in AIStor directly available to downstream cloud analytics and AI platforms, including Databricks, without duplicating data or introducing additional infrastructure. For Databricks customers, this means their cloud analytics environment can directly access governed, on-premises data without replication or delay.

This is not an integration. It is an architectural consolidation that establishes a unified, S3- and Delta-compatible data sharing plane.

A Simple Mental Model

At a high level, AIStor remains the system of record where data is created, stored, and managed. Table Sharing, via the native Delta Sharing protocol, provides controlled, read-only access to that data for Databricks and other downstream analytics platforms. Compute runs where it makes sense. The data does not move.

Tables are shared, not copied. This shift eliminates an entire class of pipelines and staging layers that exist solely to move data from one place to another.

Why This Matters to Business Leaders

This is not a technical upgrade. It is a business unlock.

Speed increases without chaos. Data is available immediately, not after staging. Teams use the tools they already trust. Platform teams stop acting as brokers and start acting as enablers.

Risk and governance become simpler, not heavier. Read-only access is enforced where the data lives. Fewer copies mean fewer compliance exposures. Approval cycles shorten because the model is easier to reason about. Security teams move from reviewing exceptions to enabling scale.

Cost and financial control improve immediately. Duplicate storage disappears. Continuous sync jobs go away. Cloud transfer and egress costs shrink. Fewer systems need to be deployed, secured, and operated. The cost curve of analytics and AI flattens rather than compounds.

AIStor Table Sharing turns data access into a controlled, repeatable capability rather than a series of one-off projects. The implications go beyond any single workload.

On-premises data becomes first-class in AI strategy. Hybrid analytics stops being a special case that requires bespoke pipelines and approvals. Data platforms shift from cost centers to leverage points. Leaders can greenlight AI initiatives without implicitly approving another wave of data duplication.

For regulated industries, high-volume data producers, and global enterprises, this shift is especially material. It accelerates modern analytics and AI without surrendering control, increasing risk, or inflating cost.

Why Now

The timing is not accidental.

Hybrid environments are the steady state. AI workloads demand fresher data, not snapshots. Open table formats such as Apache Iceberg™ and Delta are now enterprise standards. Cloud cost scrutiny is increasing, not decreasing.

Architectures built on data movement cannot survive these pressures. The question is no longer whether data sharing matters, but where it belongs. Together, AIStor and Databricks establish a clear pattern for hybrid AI and analytics. Cloud compute. On-premises data. No copies.

What Leaders Should Do Next

This is not a request for a technical evaluation. It is a strategic question.

Where in your organization is data being copied simply to make analytics possible? What does that duplication cost, financially and operationally? And does your current approach scale as AI adoption accelerates?

AIStor Table Sharing offers a different answer—one that treats sharing as a native capability of the data foundation and enables hybrid AI and analytics without the tax of data copies.

When sharing is embedded into the data foundation, cloud analytics scales without compromise. What once required custom pipelines and special approvals becomes a repeatable enterprise capability.

AIStor Table Sharing enables organizations to accelerate and scale insights without scaling complexity, risk, or cost. And that is what modern data leadership now demands.

Try it now. Explore the technical documentation, or request an AIStor license to evaluate how this model fits your environment.