Benchmarking Vector Index Creation with MinIO AIStor, Milvus, and NVIDIA cuVS

12X Faster with AIStor accelerated with NVIDIA GPUDirect RDMA for S3-compatible storage

Introduction

As vector databases grow to billions of embeddings, index creation becomes a resource-intensive operation that strains storage, compute, and networking resources. A vector database index file (or object) is a persistent data structure that allows queries to be executed without scanning the full database. While query performance often receives the most attention, the time and infrastructure required to build and rebuild an index directly affect operational agility, cluster sizing, and total cost of ownership. In large-scale deployments, index construction is not an occasional task; it is a recurring workload that requires more resources as data grows.

Since creating a vector index is computationally demanding, GPUs, through libraries such as the NVIDIA cuVS library, can provide substantial compute advantages over CPUs. At scale, however, compute is only part of the equation. Before index construction can begin, vector data must be read from durable storage into memory. Read latency dictates when the indexing phase can begin and how efficiently GPUs are utilized. Finally, network transport also plays a role, particularly in distributed deployments. Traditional TCP/IP networking introduces kernel overhead and additional latency in data movement between storage and compute nodes. RDMA-enabled networking reduces CPU involvement during data transfer and can provide lower latency and much higher throughput.

Taken together, GPU acceleration, high-performance networking, and high-throughput object storage form the foundation for efficient vector index construction. Evaluating index build performance, therefore, requires examining the interaction among these components rather than considering compute in isolation.

This benchmark evaluates vector index creation performance using MinIO AIStor as the object storage layer and Milvus as the vector database. Index creation on a CPU is compared with index creation on a GPU using the NVIDIA cuVS library. Also, the impact of TCP/IP vs. RDMA on the index creation pipeline is investigated.

The Index Creation Pipeline

Before diving into the benchmark and the metrics we measured, it is helpful to understand the end-to-end index-creation pipeline and how data flows through it. The pipeline phases for transforming a corpus of unstructured data into a vector database are illustrated below. Based on this graphic, you can see the importance of object storage for both scalability and performance. Custom corpora and vector database index files can grow rapidly as an organization adopts generative and agentic AI. Also, creating the index requires low-latency, high-bandwidth access to the segment objects.

A more detailed description of each phase and how we implemented them is provided below.

- Embedding: The first phase is the embedding phase. Documents are split into chunks, each of which is passed to an embedding model running in an embedding service, and a vector is generated for each chunk. This benchmark used the MIRACL (Multilingual Information Retrieval Across a Continuum of Languages) dataset. Text embedding was performed using the nv-embedqa-e5-v5 embedding model running on the NVIDIA NIM microservices. In total, 106 million 2048-dimensional vectors were created.

- Segmentation: Instead of sending all vectors from an embedding run to the vector database at once or individually, they are grouped together in smaller, more manageable groups, known as segments. Once a segment reaches a specified size, it is sent to AIStor as a single object. For our benchmark, each segment contained 120K vectors, resulting in a segment object of approximately 1 GB. This technique keeps the pipeline flowing without becoming too chatty or getting bogged down by large batches of vectors.

- Ingestion: Once the segment objects are stored in AIStor, they are ready for indexing. However, before they can be indexed, they must be ingested into Milvus. This is an S3 GET operation. This transfer can be done via TCP or RDMA, and the destination is either CPU memory or GPU memory.

- Indexing: Once the segment objects are ingested into Milvus’ memory, they can be indexed. This is a computationally intensive operation. Today, this is typically done on a CPU; however, using the NVIDIA cuVS library, indexing can be performed on a GPU.

Hardware Specifications

The benchmark was run in MinIO's hardware lab in conjunction with NVIDIA technology. To run the services required by the index-creation pipeline, an AIStor cluster and a GPU server were utilized. The AIStor cluster and the GPU server, along with the services that ran on them, are described below.

AIStor Cluster

We used a cluster composed of eight PowerEdge R7615 servers to run AIStor. The AIStor cluster is the critical hardware component for embedding (GETs from the embedding service), segmentation (PUTs from the CPU server running the segmentation services), and ingestion (GETs from the GPU server). Also note that the NVIDIA ConnectX7 NIC supports both TCP and RDMA.

AIStor Cluster (8x PowerEdge R7615)

- 1x AMD EPYC 9754 128-Core Processor

- 512 GB RAM

- 24x NVMe U.2 PCIe Gen 5/7.68 TB

- NVIDIA ConnectX-7 NIC

GPU Server

To run Milvus and cuVS, a PowerEdge XE9680 GPU server was utilized. The specifications of this GPU server are shown below. The segmentation services and the embedding services (including NVIDIA NIM) were also run on this server.

Milvus/cuVS Server (1x PowerEdge XE9680)

- 2x Intel Xeon Platinum 8592 (64C/128T)

- 8x NVIDIA Hopper GPUs

- 2048 GB RAM

- 2x NVIDIA ConnectX-7 NIC

Switch

An NVIDIA Spectrum SN4700 400 GbE Spine/ToR Ethernet Switch was used for networking. The NVIDIA SN4700 provides up to 400 GbE per port and a total switching capacity of 12.8 Tb/s.

The Benchmark

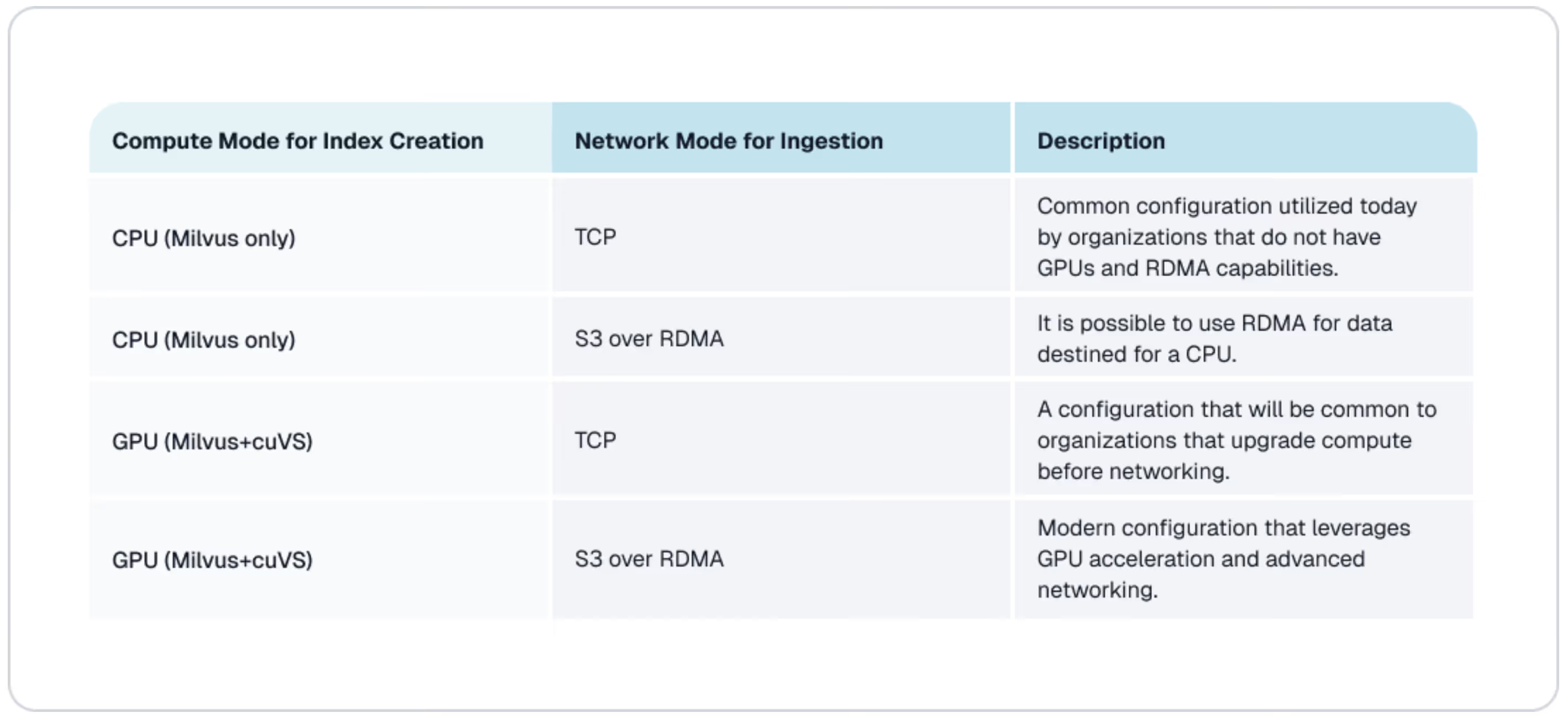

The index creation pipeline was run across the four configurations shown in the table below. By testing combinations of compute and networking, the benchmark aimed to isolate the relative impact of compute acceleration and network transport on index build time. The benchmark also aimed to show what is possible when both compute and networking are upgraded to H200 GPUs and NVIDIA GPUDirect RDMA for S3-compatible storage, respectively.

What we measured

For each experiment described above, the metrics described below were captured.

Embedding Time - The performance of the embedding phase was not measured in this benchmark. However, having a performant embedding phase is important for running efficient end-to-end tests.

Segmentation PUT time to AIStor (S3) - This is the total PUT time for all segment objects. It is important to note that the embedding phase used multiple embedding services, and the components that created the segmented objects ran in multiple threads. Therefore, this phase puts a parallel load on AIStor. This measurement did not include the wait time as segment objects reached their specified size before the PUT operation began.

Ingestion GET time to Milvus (S3) - The total GET time to Milvus memory for all segment objects.

Index Time - The aggregate computation time needed to create an index for each segment object and write it back to AIStor.

The Results

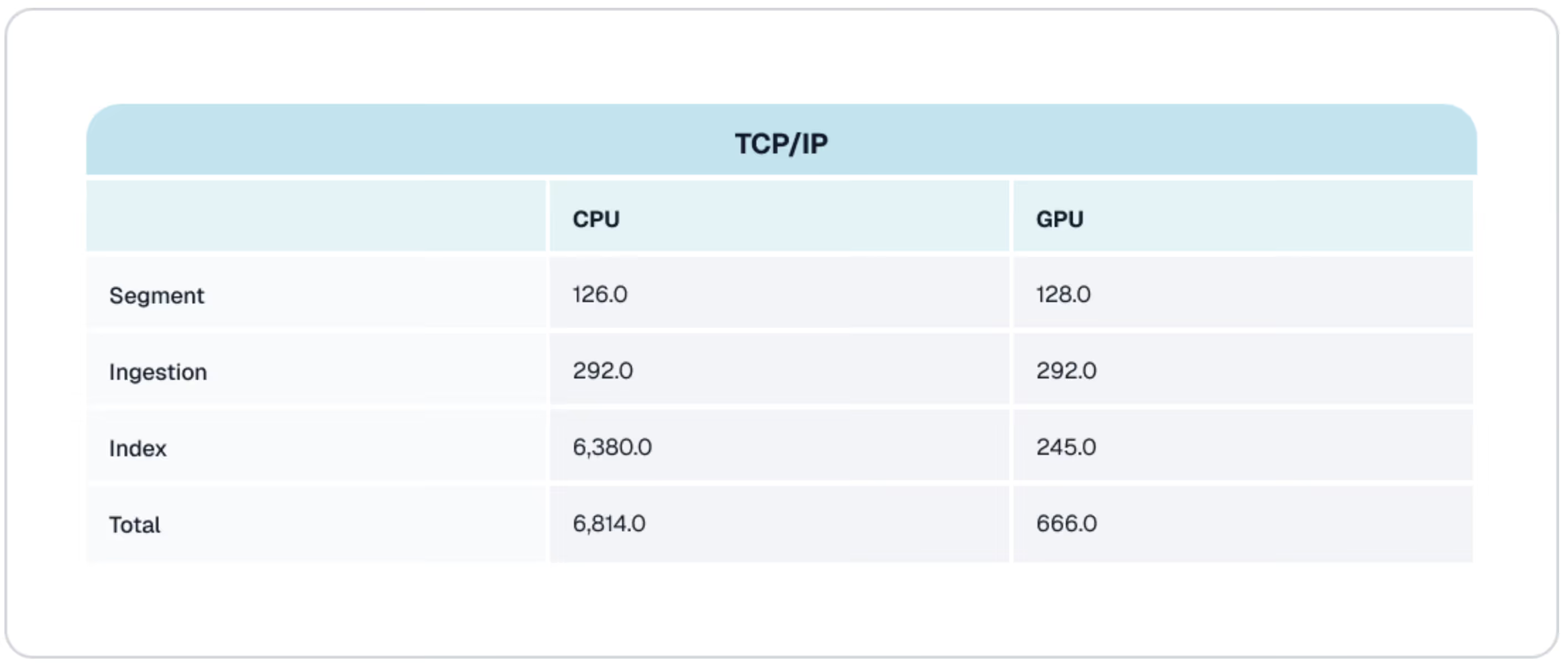

Each experiment described previously was run four times, and the average for each metric is shown in the tables below. The first table shows results for CPU vs. GPU using TCP/IP as the network protocol. This second table shows the results when NVIDIA GPUDirect RDMA for S3-compatible storage was enabled.

We can better understand the impact of each variable in this test by graphing the data. Let’s start by looking at the impact of GPUs.

CPUs vs. GPUs

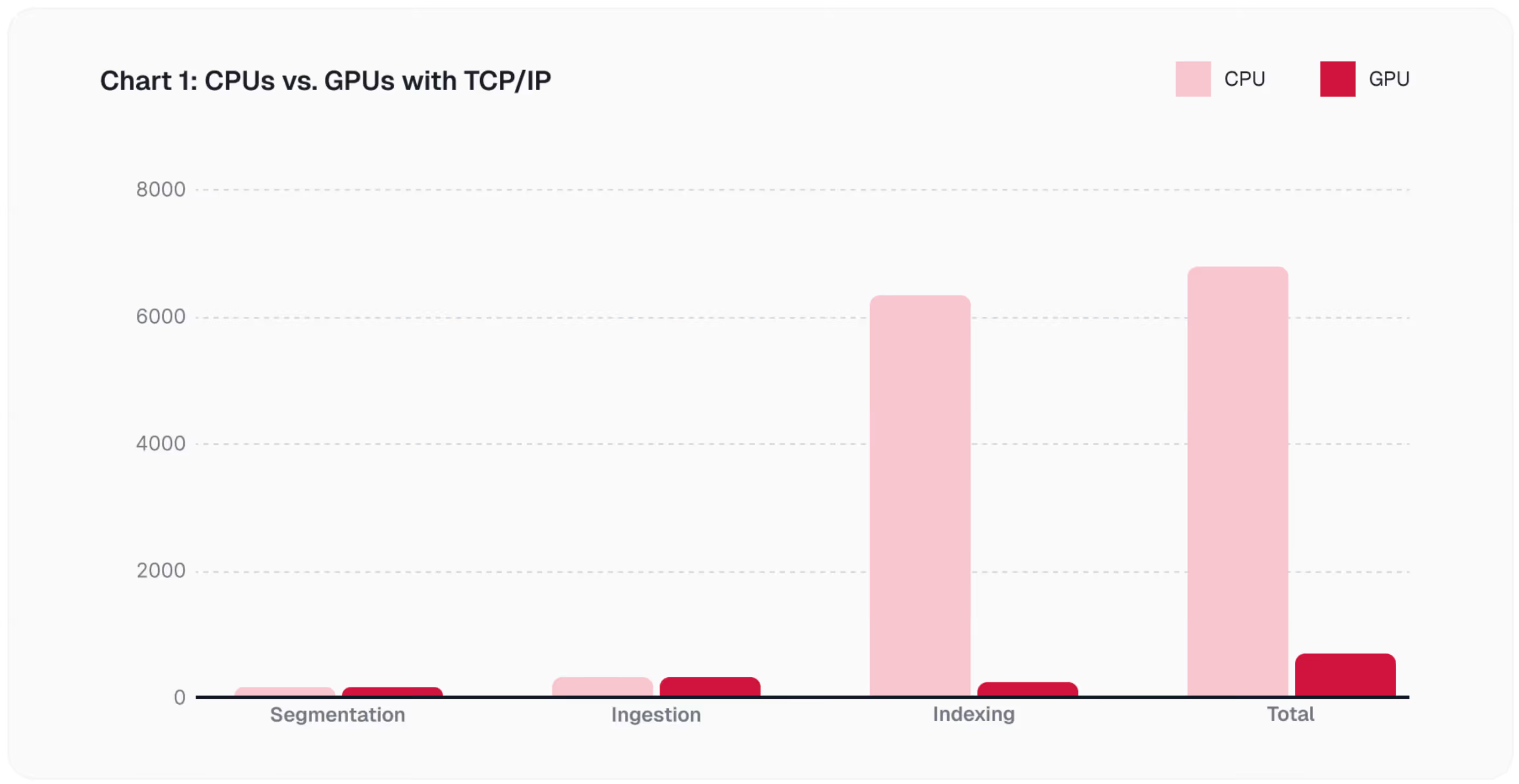

The bar chart below compares CPU and GPU performance at each stage of the index-creation pipeline when TCP/IP is used. This is the scenario an organization will be forced into if it upgrades compute to GPUs but does not have a storage solution capable of RDMA.

Since segmentation and ingestion are IO-bound phases and run on the CPU, there is no notable improvement. However, GPUs have a substantial impact on the indexing phase. On a CPU, this phase took almost two hours; on a GPU, creating an index took a little over 4 minutes.

Next, let’s look at how RDMA improves the performance of this pipeline.

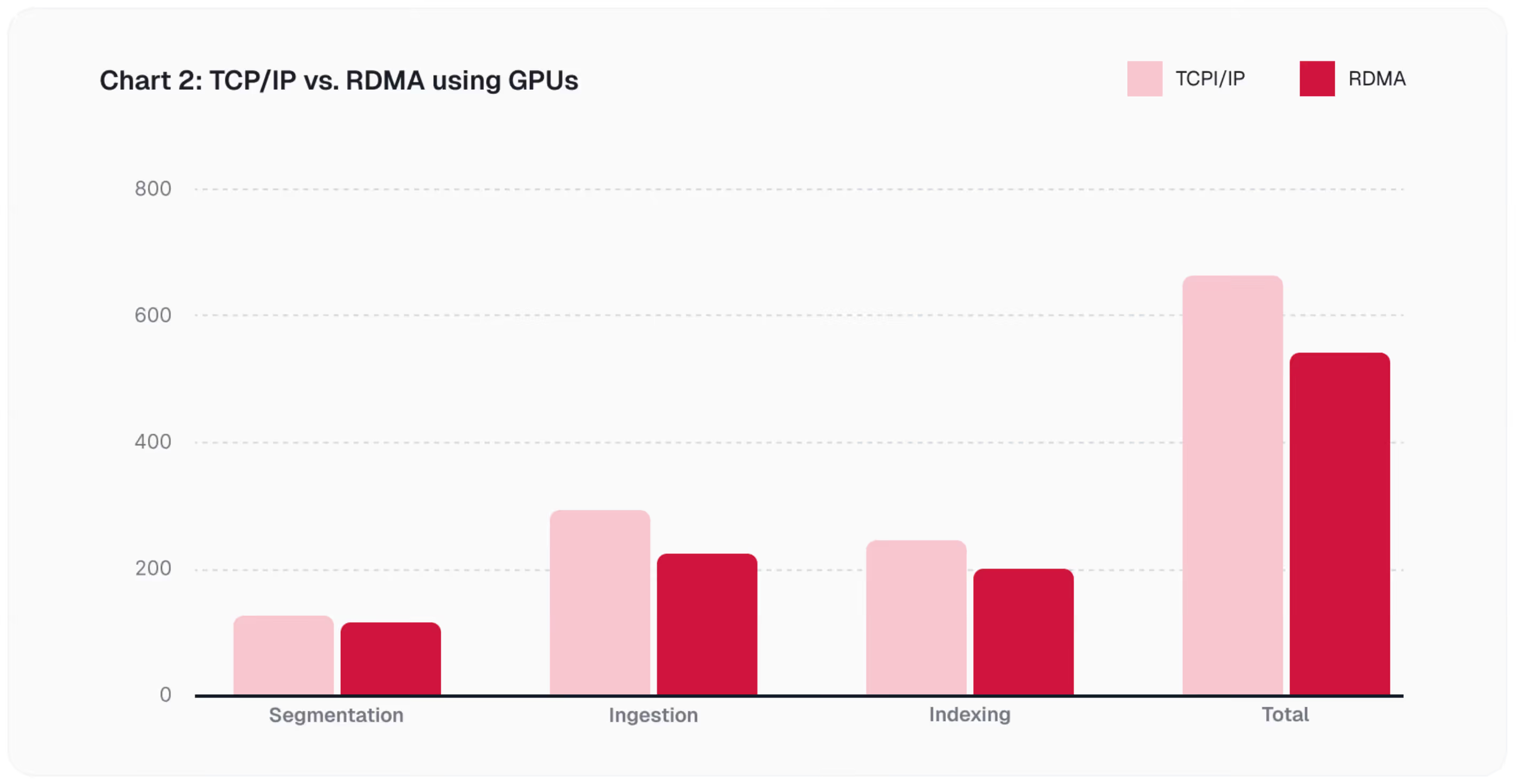

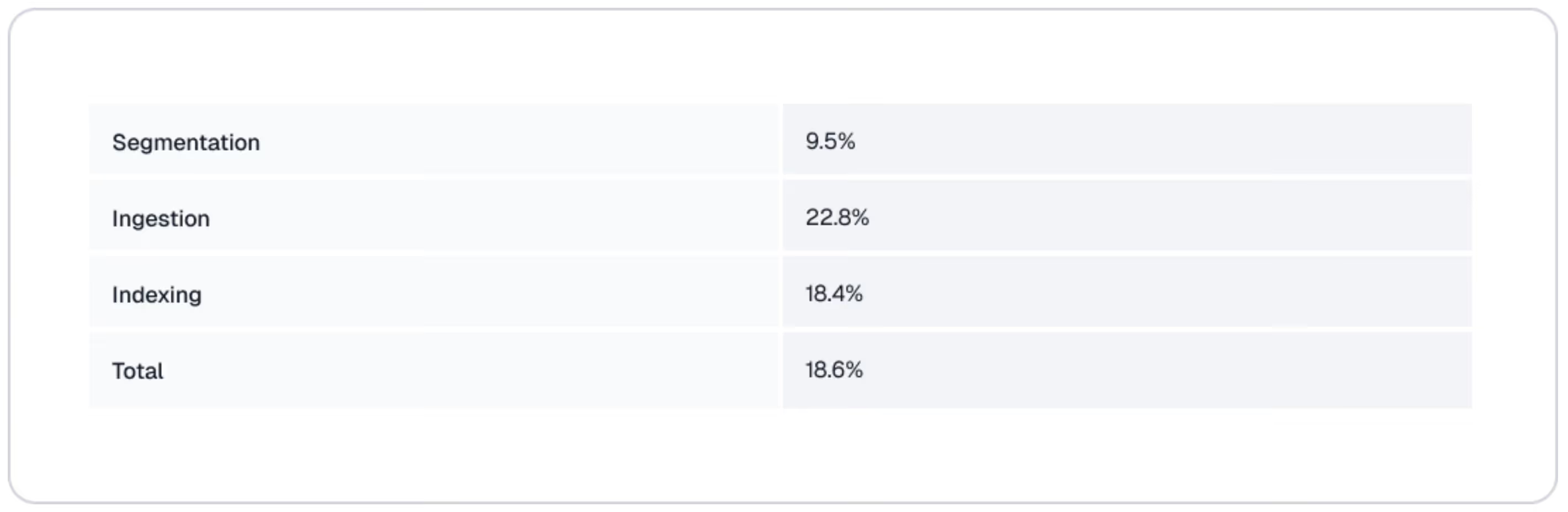

TCP/IP vs. RDMA

Improving your network’s capabilities improves the IO performance of the index creation pipeline. Segmentation and ingestion are IO-intensive. However, indexing also has an IO component. Remember, once the index is created, it needs to be written back to the vector database, which, in this benchmark, is Milvus using AIStor as the underlying storage solution. The chart below visualizes the improvement RDMA provides in these phases when a GPU is used for indexing. This is the scenario organizations will enjoy when they adopt GPUs and use AIStor as the object store backing a vector database.

These performance benefits will not save you hours as the GPUs will, but when you improve network performance, you can improve the performance of every phase of your pipeline, and this adds up. The table below shows the percent improvement by phase as well as the total percent improvement across the entire pipeline, which is 18.6%.

Conclusion

The results of this benchmark reinforce the fact that vector index creation is an end-to-end system workload rather than a purely computational task. GPU acceleration with NVIDIA cuVS can significantly reduce the time required for the compute-intensive phases of index construction, particularly for large datasets. However, the overall improvement depends on the system’s ability to deliver data to the indexing process at sufficient speed.

The much lower latency of RDMA as compared to Ethernet cannot be overstated. It is architecturally important: you can easily improve throughput by scaling out storage and networking resources, but latency is a whole different story - it is an inherent feature of the technology you chose to build your infrastructure with.

AIStor’s use of NVIDIA GPUDirect RDMA for S3-compatible storage significantly reduces networking overhead and improves data movement across the pipeline compared to TCP. As dataset sizes increase, balanced performance across storage, network, and compute becomes more important than optimizing any single layer. For teams managing large-scale vector databases, index build performance is best addressed as a coordinated infrastructure problem rather than an isolated compute optimization task.

Resources

Press Release: MinIO AIStor Brings Object Data Stores for the NVIDIA STX Reference Architecture

Whitepaper: MinIO AIStor: The Unified Data Foundation for the NVIDIA AI Factory

Blog: AIStor Runs Natively Inside NVIDIA BlueField-4: Object Data at Wire Speed